$ cd /projects/betsquid

betsquid.com — Automated Horse Racing Betting System

GitHub: private (646 commits, Rails 4.2/JRuby)

PRE — Idea · Setup · Build

Goal: Build an automated betting system that exploits statistical patterns in UK horse racing markets. Not gambling. Algorithmic trading on betting exchanges.

A friend came up with the core idea and an algorithm: a recovery-based betting strategy where the statistical probability of winning within 9 consecutive races was high enough to be profitable. If you lose, you re-bet with adjusted stakes to recover the loss in the next race. The math said it worked. The math was mostly right.

I was the engineer. He was the domain expert. The plan: build the platform, prove the algorithm, find investors, scale it, maybe sell it to a Malta-based betting company.

I coded the entire thing while traveling for freelance work. In a comfy lounge chair in a hotel lobby, sipping on a glass of white wine.

The algorithm variants were named during late-night brainstorming sessions: Kermit, Topcat, John, Kylie, Steve, Britney, Bob. Each one a different tuning of the same core strategy. I have no idea which ones were named after real people and which ones were just funny at 11pm with a whisky.

Stack:
- Ruby on Rails 4.2 on JRuby 9.0.5.0
- MySQL database (34MB+ of race data)
- Betfair REST API (primary exchange)
- BetDAQ SOAP/XML API (secondary exchange)
- Sidekiq for background job processing
- Clockwork scheduler for automated daily runs
- Puma application server
- PayPal Recurring for subscription billing
- Gruff for odds history graph generation
- Capistrano for deployment
- 643MB of historical odds graphs
- Certificate-based Betfair authentication

The Algorithm

The system wasn't picking winners. It was detecting market
movement and exploiting price inefficiencies.

THE CORE: STEAM DETECTION

A "steam" in betting markets is a rapid odds drop: the price
collapses because informed money is flooding in before a race.
The system watched for these patterns:

1. Pull today's UK races from Betfair at 11:00am
2. Track odds movements from 10:30am (when racing info
   drops and the market starts moving)
3. Identify "steam" candidates: horses with starting
   odds < 3.75 whose price is dropping rapidly
4. Place a BACK bet at the steamed-down price
5. Wait for DRIFT (price recovery)
6. Place a LAY bet to lock in profit regardless of the
   actual race outcome

Back low, lay high. The spread is profit. The race result
is irrelevant if both legs execute.
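The detection step (item 3 above) can be sketched roughly as follows. This is a minimal illustration, not the original code: the `Sample` struct, the `steam_candidate?` helper, the 10% drop threshold, and the 5-minute window are all assumptions made for the example. Only the 3.75 starting-odds cutoff comes from the description above.

```ruby
# Hypothetical steam check. Names and thresholds are illustrative only.
Sample = Struct.new(:at, :price) # at: seconds since tracking began, price: decimal odds

MAX_START_ODDS = 3.75  # cutoff taken from the description above
MIN_DROP_PCT   = 10.0  # assumed: how far the price must fall to count as steam
WINDOW_SECONDS = 300   # assumed: how recent the fall must be

def steam_candidate?(samples, max_start: MAX_START_ODDS,
                     min_drop_pct: MIN_DROP_PCT, window: WINDOW_SECONDS)
  return false if samples.size < 2
  return false unless samples.first.price < max_start

  latest = samples.last
  # Only look at samples inside the recent window.
  recent = samples.select { |s| latest.at - s.at <= window }
  peak   = recent.map(&:price).max
  drop   = (peak - latest.price) / peak * 100.0
  drop >= min_drop_pct
end

samples = [Sample.new(0, 3.5), Sample.new(60, 3.4), Sample.new(120, 3.0)]
steam_candidate?(samples) # => true (3.5 down to 3.0 is a fall of about 14%)
```

A percentage drop keeps the example short. A tick-based threshold per price bracket, as the SteamyBrain description suggests, would be the closer analogue.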

THE BRAIN: STEAMYBRAIN (476 lines)

The algorithm engine that built the complete Betfair odds
ladder (1.01 to 10.0, with varying tick sizes per range),
defined steam thresholds for every starting price bracket,
and configured drift tolerances. Every price range had its
own entry/exit parameters. Deeply tuned. Multiple config
versions for A/B testing different strategies.
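For readers unfamiliar with the odds ladder, here is a small sketch of how one could be built for the 1.01 to 10.0 range. The tick bands are Betfair's standard price increments as commonly documented, so treat them as an assumption to check against the current exchange rules. `TICK_BANDS`, `build_ladder`, and `ticks_between` are names invented for this example, not taken from SteamyBrain.

```ruby
# Bands are [from, to, step] in hundredths of a point, so the
# arithmetic stays in integers and avoids float drift.
TICK_BANDS = [
  [101,  200,  1],  # 1.01 to 2.00 in steps of 0.01
  [200,  300,  2],  # 2.00 to 3.00 in steps of 0.02
  [300,  400,  5],  # 3.00 to 4.00 in steps of 0.05
  [400,  600, 10],  # 4.00 to 6.00 in steps of 0.10
  [600, 1000, 20]   # 6.00 to 10.0 in steps of 0.20
].freeze

def build_ladder(bands = TICK_BANDS)
  cents = []
  bands.each do |from, to, step|
    from.step(to, step) { |c| cents << c }
  end
  # Band edges (2.00, 3.00, ...) appear twice, so de-duplicate.
  cents.uniq.map { |c| c / 100.0 }
end

# Number of ticks from one ladder price to another (negative if `to` is shorter).
def ticks_between(ladder, from, to)
  i = ladder.index(from)
  j = ladder.index(to)
  raise ArgumentError, 'price not on ladder' if i.nil? || j.nil?
  j - i
end

ladder = build_ladder
ladder.size                       # => 210
ticks_between(ladder, 3.0, 3.5)   # => 10
```

Measuring movement in ticks rather than raw odds is what makes per-bracket thresholds comparable: a 0.10 move is ten ticks at 1.50 but a single tick at 5.0.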

THE EXECUTOR: KERMIT (279 lines)

The back-and-lay arbitrage engine. Places the BACK bet when
steam is detected. Monitors for drift. Places the LAY bet
when recovery begins. Aborts if the price crashes below the
floor. Operates in a 3-minute pre-race window. Calculates
lay stakes dynamically based on the back entry price.
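The standard way to size the second leg is to pick the lay stake that equalises the outcome whether the horse wins or loses. The sketch below shows that arithmetic. It is the textbook formula, not necessarily what Kermit did, and the `hedge` helper is a hypothetical name. Exchange commission is ignored. In decimal-odds terms the sign of the locked-in figure depends only on which of the two prices is larger, so the function reports it rather than assuming it.

```ruby
# Equal-outcome hedge: lay_stake = back_stake * back_price / lay_price.
def hedge(back_stake:, back_price:, lay_price:)
  lay_stake = back_stake * back_price / lay_price
  # Horse wins: the back bet pays out, the lay bet pays its liability.
  if_wins  = back_stake * (back_price - 1) - lay_stake * (lay_price - 1)
  # Horse loses: the back stake is lost, the lay stake is kept.
  if_loses = lay_stake - back_stake
  { lay_stake: lay_stake.round(2),
    if_wins:   if_wins.round(2),
    if_loses:  if_loses.round(2) }
end

result = hedge(back_stake: 10.0, back_price: 3.0, lay_price: 2.5)
result[:lay_stake] # => 12.0
result[:if_wins]   # => 2.0
result[:if_loses]  # => 2.0
```

Because both outcomes pay the same amount, the position no longer depends on the race result, which is the property the description relies on.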

THE BACKTESTER: STEAMWORKS (215 lines)

Monte Carlo-style simulation engine. Replayed millions of
historical bets across years of data. Tweakable parameters
for sensitivity, entry thresholds, drift tolerance. Generated
CSV reports and P&L curves. This was the R&D lab where
strategies were born and killed.
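A parameter sweep of this kind can be sketched in a few lines. This is an illustration under assumptions, not SteamWorks itself: the record fields `:drop_pct` and `:pnl`, and the helpers `pnl_curve`, `max_drawdown`, and `sweep`, are hypothetical. It also shows only the deterministic replay part; any randomised resampling a Monte Carlo run would add is left out.

```ruby
# Running total of per-bet results, i.e. the P&L curve.
def pnl_curve(results)
  total = 0.0
  results.map { |r| (total += r).round(2) }
end

# Largest peak-to-trough fall along the curve (starting peak is 0).
def max_drawdown(curve)
  peak  = 0.0
  worst = 0.0
  curve.each do |v|
    peak = v if v > peak
    fall = peak - v
    worst = fall if fall > worst
  end
  worst.round(2)
end

# Replay the same history once per entry threshold and summarise each run.
def sweep(history, thresholds)
  thresholds.map do |t|
    results = history.select { |h| h[:drop_pct] >= t }.map { |h| h[:pnl] }
    curve   = pnl_curve(results)
    { threshold: t, bets: results.size,
      final: curve.last || 0.0, max_drawdown: max_drawdown(curve) }
  end
end

history = [
  { drop_pct: 12.0, pnl:  2.0 },
  { drop_pct:  8.0, pnl: -1.0 },
  { drop_pct: 15.0, pnl:  3.0 },
  { drop_pct: 11.0, pnl: -5.0 },
  { drop_pct:  9.0, pnl:  1.0 }
]
sweep(history, [5.0, 10.0]).each { |row| puts row.inspect }
```

Reporting drawdown next to the final figure matters here: two parameter sets can end at the same total while one of them spends far longer under water.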

All of this was built without AI, without machine learning
libraries, without Python notebooks. Pure Ruby, pure logic,
pure pattern recognition. Data crunching across millions of
records from the comfort of a hotel lobby.

The Cross-Exchange Play

The system tracked both Betfair and BetDAQ simultaneously. Same races, same horses, different odds. A "Supermatch" model handled identity reconciliation across exchanges, matching the same horse in the same race on both platforms. This enabled cross-exchange arbitrage: back at one exchange, lay at the other, guaranteed profit from the price difference.

The BetDAQ integration used SOAP/XML (because of course it did; this was the era). The Betfair integration used REST with SSL certificate authentication. Both APIs were polled every 10 seconds during active racing. Sidekiq workers processed candidates in parallel.
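The reconciliation problem is mostly one of normalising names so that the same runner produces the same key on both exchanges. The sketch below shows one plausible approach. The field names (`:course`, `:off_time`, `:name`), the helpers `runner_key`, `key_for`, and `supermatch`, and the sample runner are all invented for illustration. The real Supermatch model is not described in enough detail to reproduce.

```ruby
# Build a comparable key: same course, same off time, same cleaned-up name.
def runner_key(course:, off_time:, name:)
  clean = name.sub(/\s*\([A-Z]{2,3}\)\s*\z/, '') # drop a country suffix like "(IRE)"
              .downcase
              .gsub(/[^a-z0-9]/, '')             # ignore spaces and punctuation
  [course.strip.downcase, off_time, clean]
end

def key_for(runner)
  runner_key(course: runner[:course], off_time: runner[:off_time], name: runner[:name])
end

# Pair each Betfair runner with the BetDAQ runner sharing its key, if any.
def supermatch(betfair_runners, betdaq_runners)
  by_key = {}
  betdaq_runners.each { |r| by_key[key_for(r)] = r }
  betfair_runners.map { |bf| (bd = by_key[key_for(bf)]) ? [bf, bd] : nil }.compact
end

betfair = [{ course: 'Cheltenham',  off_time: '14:30', name: 'Hotel Lobby (IRE)' }]
betdaq  = [{ course: 'cheltenham ', off_time: '14:30', name: 'HOTEL LOBBY' },
           { course: 'Ascot',       off_time: '15:00', name: 'Someone Else' }]
supermatch(betfair, betdaq).size # => 1
```

Exact-key matching is the simplest option. Anything that fails to match (spelling variants, reserves, late non-runners) would need a fallback such as manual review or fuzzy comparison.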

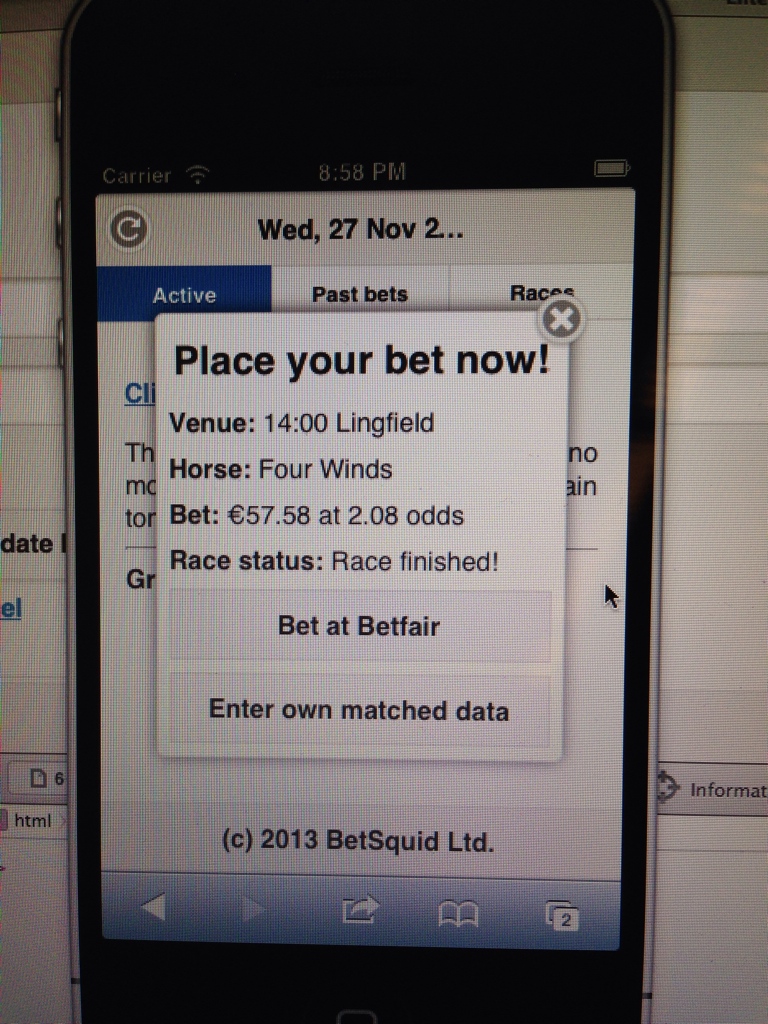

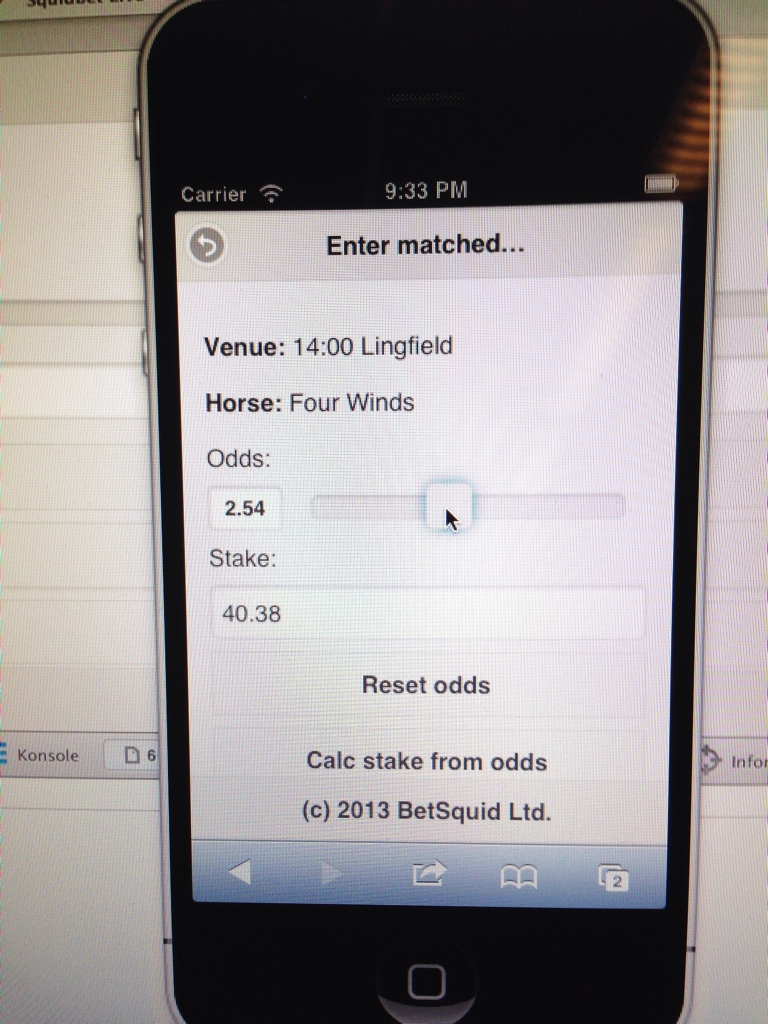

The Business Model

BetSquid was designed as a subscription product:

- Web dashboard with real-time odds updates
- Historical performance graphs per runner per market
- Manual bet placement and automated execution modes
- Tiered plans: Basic (view only) and Pro (autobet)
- PayPal recurring billing
- Tiered betting days: bt1 through bt5, with daily loss
  limits and state machine progression

The idea was: prove the algorithm, show the P&L charts,
sell subscriptions to punters who want to automate their
betting. My partner built presentations. Designed a cool
logo. Approached Malta-based betting companies. We had big
goals.

Nobody invested. Nobody subscribed. The product was built.
The marketing never happened. Classic Dan: "you gotta have
the product first." So I built it. All 646 commits of it.
And we never sold it.

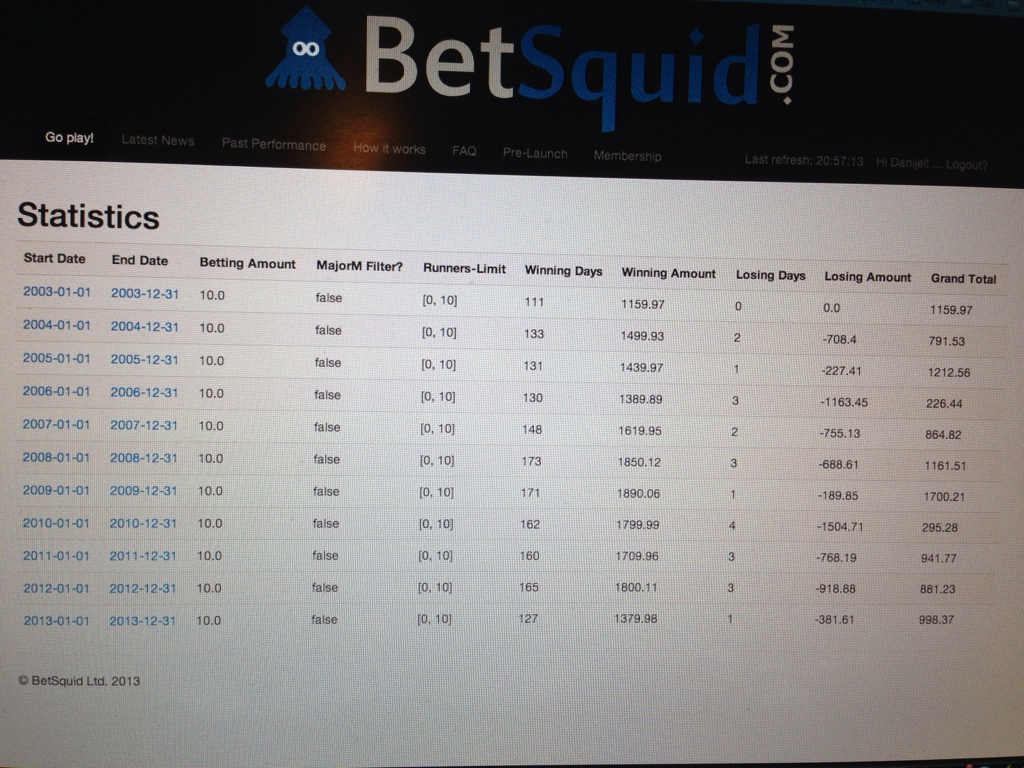

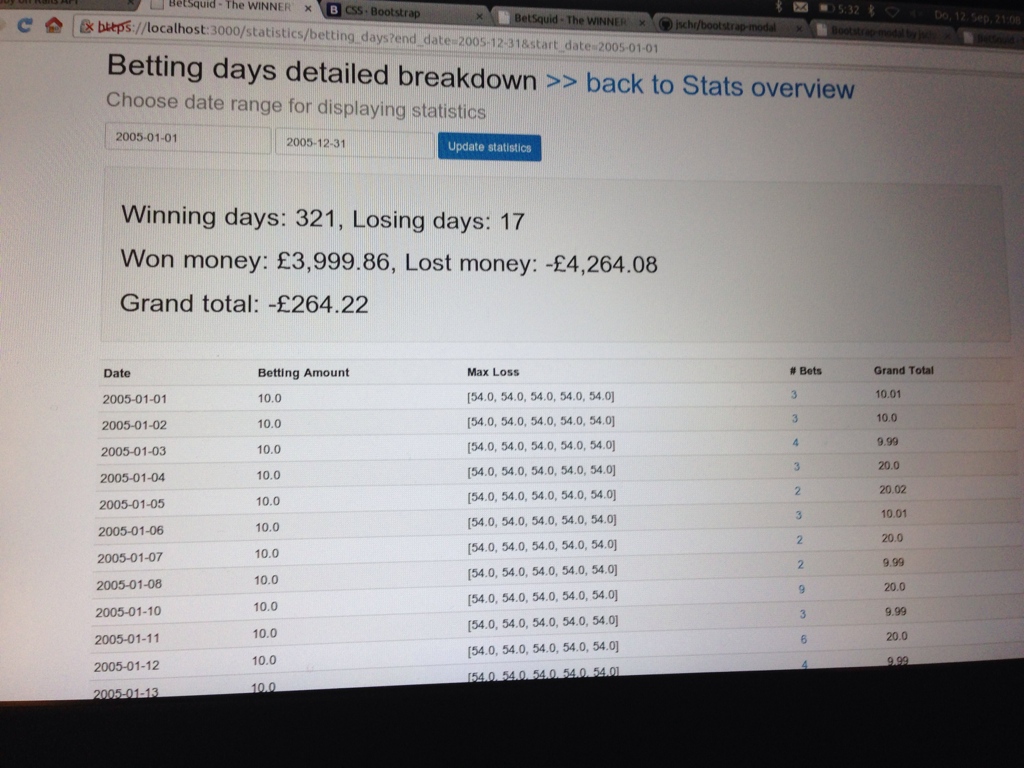

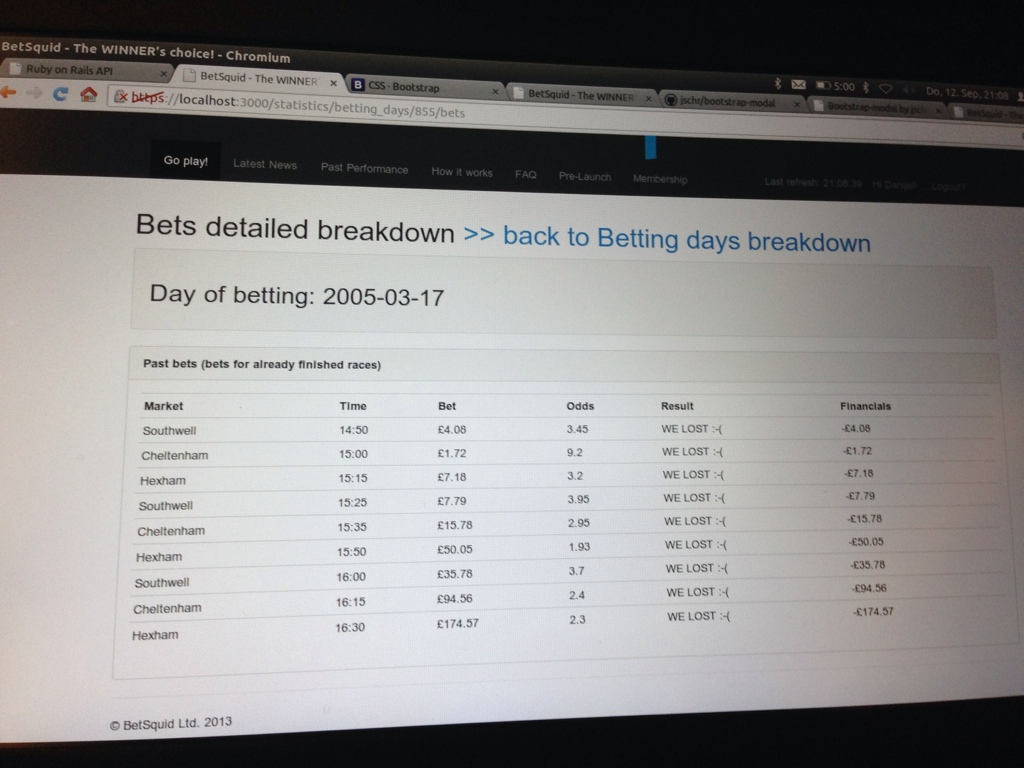

Screenshots — The Dashboard & The Data

POST — Learnings · Afterthoughts · Timeline

What happened:

It worked. Until it didn't.

The algorithm was sound in backtesting. The Monte Carlo
simulations showed consistent profitability across years of
historical data. Live trading confirmed it, for a while.
Money was being made. The math was holding.

Then the edge cases arrived. Markets are manipulated. Weather
changes cause unpredictable results (a muddy track at
Cheltenham rewrites every probability). The recovery strategy
that "statistically guaranteed" a win within 9 races hit
streaks that the statistics said were improbable, but the
universe didn't care about statistics.
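The gap between "improbable" and "impossible" is easy to put numbers on. The sketch below is a generic worked example, not the actual staking plan: the 35% per-race win probability, the decimal odds of 3.0, and the 10-unit target are assumptions chosen for illustration, and races are treated as independent. `streak_probability` and `recovery_stakes` are hypothetical helper names.

```ruby
# Chance of losing `races` times in a row, given a per-race win probability.
def streak_probability(win_prob, races)
  (1.0 - win_prob)**races
end

# Stake needed at each step to win back everything lost so far plus the target.
def recovery_stakes(odds:, target:, losses:)
  lost = 0.0
  Array.new(losses) do
    stake = (lost + target) / (odds - 1.0)
    lost += stake
    stake.round(2)
  end
end

streak_probability(0.35, 9)
# about 0.0207, i.e. roughly one nine-race sequence in 48 fails completely

recovery_stakes(odds: 3.0, target: 10.0, losses: 9).first(3)
# => [5.0, 7.5, 11.25]
```

Under these assumptions each stake is 1.5 times the previous one, so nine straight losses leave about 374 units lost in pursuit of a 10-unit target. A 2% event sounds rare, but a system running many sequences a month will meet it regularly.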

The investor search went nowhere. Malta betting companies
weren't interested. The presentation deck gathered dust. And
eventually, like every pattern in this archive, the energy
moved elsewhere.

But the data crunching (millions of bets analyzed for
patterns, Monte Carlo simulations across years of historical
data, odds ladder construction, cross-exchange arbitrage
logic) was all built by one person in Ruby, without AI,
without ML frameworks, without anything except logic and
persistence and whisky. That counts for something.

Learnings:

- Algorithmic betting on exchanges is algorithmic trading
  with worse infrastructure and better stories. The math is
  the same. The domain knowledge required is immense.
- Backtesting is seductive and dangerous. A system that shows
  consistent profit across 5 years of historical data can
  still blow up in month 6 of live trading. The market adapts.
  The weather doesn't cooperate. The tail events arrive.
- Recovery-based betting strategies (martingale variants) work
  brilliantly until they don't. The statistical probability
  of winning within N races is high. But "high probability"
  is not "certainty," and when the streak hits, the losses
  compound exactly as fast as the strategy assumed they
  wouldn't.
- 646 commits over 5.5 years, coded in hotel lobbies across
  Europe during freelance travel, is either the most dedicated
  or the most portable development process imaginable. Probably
  both.
- The algorithm names (Kermit, Topcat, John, Kylie, Steve,
  Britney, Bob) are a testament to late-night engineering
  decisions. Some of the best code is written in the worst
  conditions.
- "Build it and they will come" continues to be the most
  persistent and most wrong assumption in my career. Nobody
  comes. You have to go get them. Marketing is always the
  bottleneck. Always.
- The cross-exchange arbitrage concept (back at Betfair, lay
  at BetDAQ) was genuinely clever. Two different markets,
  same event, guaranteed spread. The execution was the hard
  part: latency, liquidity, odds ladder alignment.

Timeline:

- 31 Jul 2013: First commit. The journey begins.
- 2013-2014: Core platform built. Betfair + BetDAQ APIs
  integrated. Steam detection algorithm developed. 34MB of
  race data accumulated. Coded in hotel lobbies across Europe.
- 2014-2015: Cross-exchange matching ("Supermatch"). PayPal
  billing. Tiered betting days. Compound betting logic.
  Live product running with real money.
- 2015-2017: Backtesting engine (SteamWorks). Monte Carlo
  simulations across millions of historical bets. Algorithm
  variants tested: Topcat, John, Kylie, Steve, Britney, Bob.
- 2018: Kermit (back-and-lay arbitrage engine). SuperKermit.
  SteamBomb workers for distributed processing. The most
  sophisticated version of the system.
- Feb 2019: Final commit ("lay works"). Development stops.
- 643MB of historical odds graphs. 34MB+ of race data.
  Millions of simulated bets. Zero paying customers.

Status: Complete. 646 commits of algorithmic betting infrastructure,
built in hotel lobbies across Europe between freelance gigs. The
steam detection algorithm worked. The backtesting infrastructure
is production-quality. The market timing and marketing didn't
align. The engineering did.